AI Workflow Bottlenecks: Where Productivity Gains Go to Die

AI speeds up individual work, then bottlenecks kill the gains. Learn where workflow design breaks and what to redesign first.

Most companies bought AI licenses, gave everyone access, and expected transformation. What they got was 200 people doing the same work slightly faster, generating more output than their downstream processes could absorb.

Individual productivity gains from AI do not become company-level performance gains automatically. They get absorbed by coordination overhead, approval layers, and handoff points that existed before AI arrived.

Engineers move faster with AI coding tools. QA becomes a bottleneck because review cycles do not change. Product managers write the same specification documents. Approval chains stay intact. Faster individuals inside a system designed for slower ones create congestion, not velocity.

This is the gap most leaders need to close. The question is not "how do we make our people more productive with AI?" The question is "how do we redesign the way work flows so AI gains compound instead of dissipate?"

Here are four areas where that redesign needs to happen.

1. Stop optimizing individual productivity. Start redesigning workflows.

Map your current process from idea to production. Not the version on the wiki that nobody updates. The real one. Trace a feature or deliverable from the moment someone says "we should build this" to the moment it reaches a customer.

You will find three to five handoff points where work stalls. Specification reviews. Design approvals. Code review queues. Staging environment access. Release sign-offs. These handoff points were designed for a pace of work that no longer matches reality. When AI-assisted engineers produce work two to three times faster, the same bottlenecks become the constraint on total throughput.

The fix is not to speed up the bottlenecks with more AI. The fix is to eliminate handoff points that no longer add value, or restructure them to operate at the new pace. For a 50-person company, this might mean collapsing a three-stage review process into a single automated quality gate with human exception handling. For a 200-person company, it might mean reorganizing teams around outcomes instead of functions so fewer cross-team handoffs are required.

Do this before buying more AI licenses. Adding speed upstream of a bottleneck makes the bottleneck worse.
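To make the arithmetic concrete, here is a minimal sketch of a three-stage pipeline (build, code review, release sign-off). The stage capacities are invented numbers for illustration, not measurements, and `simulate` is a hypothetical helper. It assumes each stage can only process a fixed number of items per week and that unprocessed work waits in a queue in front of that stage.

```python
def simulate(capacities, weeks):
    """Return (items shipped, queue length in front of each stage).

    capacities[0] is how many items the first stage produces per week;
    every later stage processes at most its own capacity per week.
    """
    queues = [0] * len(capacities)
    shipped = 0
    for _ in range(weeks):
        incoming = capacities[0]  # the build stage always works at full speed
        for i in range(1, len(capacities)):
            queues[i] += incoming                      # work arrives at the handoff
            processed = min(queues[i], capacities[i])  # the stage is capped
            queues[i] -= processed
            incoming = processed                       # only processed work moves on
        shipped += incoming
    return shipped, queues


# Illustrative capacities in items per week: build, code review, release sign-off.
before_ai = [10, 12, 12]
after_ai = [25, 12, 12]   # build is 2.5x faster; review and sign-off are unchanged

print(simulate(before_ai, weeks=8))  # (80, [0, 0, 0])
print(simulate(after_ai, weeks=8))   # (96, [0, 104, 0])
```

Under these assumptions, a 2.5x faster build stage ships only 20 percent more in eight weeks (96 items instead of 80), while 104 items pile up in front of code review. The throughput of the whole pipeline is set by its slowest stage, not its fastest one.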

2. Shift investment from who builds it to who validates it.

When AI generates most of the implementation, the highest-value human skill becomes defining what "correct" looks like. This is the single largest mindset shift for leaders to make.

The value of AI in your organization is directly proportional to how well you codify correctness. If your quality standards live in someone's head, AI will produce plausible-looking output that fails in production. If your standards are explicit, measurable, and embedded in automated workflows, AI becomes reliable.

This has real implications for hiring and team structure. The traditional ratio of builders to testers was roughly 4:1 or 5:1. In an AI-first organization, that ratio inverts. You need fewer people writing code and more people defining acceptance criteria, building test automation, and validating outputs against business requirements.

Companies extracting real value from AI agents have acceptance criteria that are machine-readable, not buried in Slack threads and verbal conversations. Their QA teams have evolved from manual testers into system architects who build validation frameworks that run continuously.

The practical step: pick your highest-volume workflow. Write down every condition that separates good output from bad output. Make those conditions specific enough that a machine can evaluate them without ambiguity. If you struggle to do this, you have found the bottleneck. The AI is not the problem. The ambiguity in your quality definition is.
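As one way to picture machine-readable acceptance criteria, the sketch below writes each condition as a named check that returns true or false. Everything in it is hypothetical: the workflow (an AI-generated client report), the field names, and the thresholds are assumptions chosen for illustration, and your own criteria will differ.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    name: str
    check: Callable[[dict], bool]  # returns True when the output passes


# Hypothetical criteria for a generated client report.
CRITERIA = [
    Criterion("has_summary", lambda r: bool(r.get("summary", "").strip())),
    Criterion("summary_under_120_words",
              lambda r: len(r.get("summary", "").split()) <= 120),
    Criterion("cites_at_least_one_source",
              lambda r: len(r.get("sources", [])) >= 1),
    Criterion("totals_reconcile",
              lambda r: abs(sum(r.get("line_items", [])) - r.get("total", 0)) < 0.01),
]


def evaluate(output: dict) -> list[str]:
    """Return the names of failed criteria; an empty list means the output passes."""
    return [c.name for c in CRITERIA if not c.check(output)]


good = {"summary": "Revenue rose.", "sources": ["ledger"],
        "line_items": [40.0, 60.0], "total": 100.0}
bad = {"summary": "", "sources": [],
       "line_items": [40.0, 60.0], "total": 90.0}

print(evaluate(good))  # []
print(evaluate(bad))   # ['has_summary', 'cites_at_least_one_source', 'totals_reconcile']
```

The point of the exercise is the list itself. Any condition you cannot express as a check like these is a quality standard that still lives in someone's head.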

3. Address your agent security model now.

This is the most overlooked area in AI adoption, and the one with the highest cost of getting wrong late.

Role-based access control was built for humans who log in and authenticate. It assumes a person sits at a keyboard, passes a multi-factor authentication check, and operates within defined business hours. Autonomous AI agents break every one of those assumptions. They run scheduled tasks at 2 AM. They access multiple systems in sequence. They act without a human present.

Most companies have not built an identity model for agents. They work around it by running agents under a human's credentials, which creates audit trail problems, compliance exposure, and a security model that cannot distinguish between a person and a bot acting on their behalf.

The fix requires three things. First, attribute-based access control that grants permissions based on context (what data, what action, what time, what risk level) rather than static role assignments. Second, agent identity frameworks where every autonomous process has its own identity, its own credential lifecycle, and its own audit trail. Third, a non-negotiable rule: no anonymous agents, and no agent impersonating a human identity.
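The three requirements above can be sketched as a small policy check. This is an illustration under stated assumptions, not a production access-control system: the `agent:` identity prefix, the attribute names, the 01:00 to 05:00 UTC batch window, and the specific rules are all hypothetical, and a real deployment would rely on your identity provider and policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    principal_id: str    # e.g. "agent:invoice-reconciler" or "user:alice"
    principal_type: str  # "agent" or "human"
    action: str          # "read" or "write"
    data_class: str      # "public", "internal", or "restricted"
    hour_utc: int        # hour of day the request is made, 0-23
    risk: str            # "low" or "high"


AUDIT_LOG: list[dict] = []  # every decision is recorded against the real principal


def decide(req: Request) -> tuple[bool, str]:
    """Evaluate context attributes instead of a static role."""
    if not req.principal_id:
        return False, "anonymous principal"
    if req.principal_type == "agent":
        if not req.principal_id.startswith("agent:"):
            return False, "agent must not borrow a human identity"
        if req.risk == "high":
            return False, "high-risk action needs a human approver"
        if req.action == "write" and req.data_class == "restricted":
            return False, "agents may not write restricted data"
        if req.action == "write" and not (1 <= req.hour_utc < 5):
            return False, "agent writes allowed only in the batch window"
    return True, "allowed"


def authorize(req: Request) -> bool:
    allowed, reason = decide(req)
    AUDIT_LOG.append({"principal": req.principal_id, "action": req.action,
                      "allowed": allowed, "reason": reason})
    return allowed


nightly = Request("agent:invoice-reconciler", "agent", "write", "internal", 2, "low")
borrowed = Request("user:alice", "agent", "read", "internal", 2, "low")

print(authorize(nightly))   # True
print(authorize(borrowed))  # False
```

Because the agent carries its own identity, the 2 AM write is both permitted and attributable. The same process running under a person's credentials is rejected rather than silently logged as that person.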

Companies that address this early avoid a painful retrofit later. Companies that wait face it during an audit, a breach, or a compliance review. All three are more expensive than doing the work now.

4. Look for new products, not faster versions of old ones.

The framing "AI will make your existing business 10x better" is seductive but misleading. Efficiency gains are real. They are not where the largest value accumulates.

The biggest AI wins for your company will likely come from products and services you cannot offer today, not from doing your current work faster. A professional services firm that uses AI to deliver reports three times faster saves margin. A professional services firm that uses AI to offer continuous monitoring as a subscription service creates a new revenue line.

Pressure-test this in your next strategy session: what would you offer if implementation cost dropped to near zero? The answer points toward products and services that were previously uneconomic. Personalized deliverables at scale. Continuous analysis instead of periodic reports. Custom solutions for customer segments too small to serve profitably before.

If your AI strategy starts and ends with efficiency, you are leaving the largest opportunities on the table.

The structural shift underneath all of this

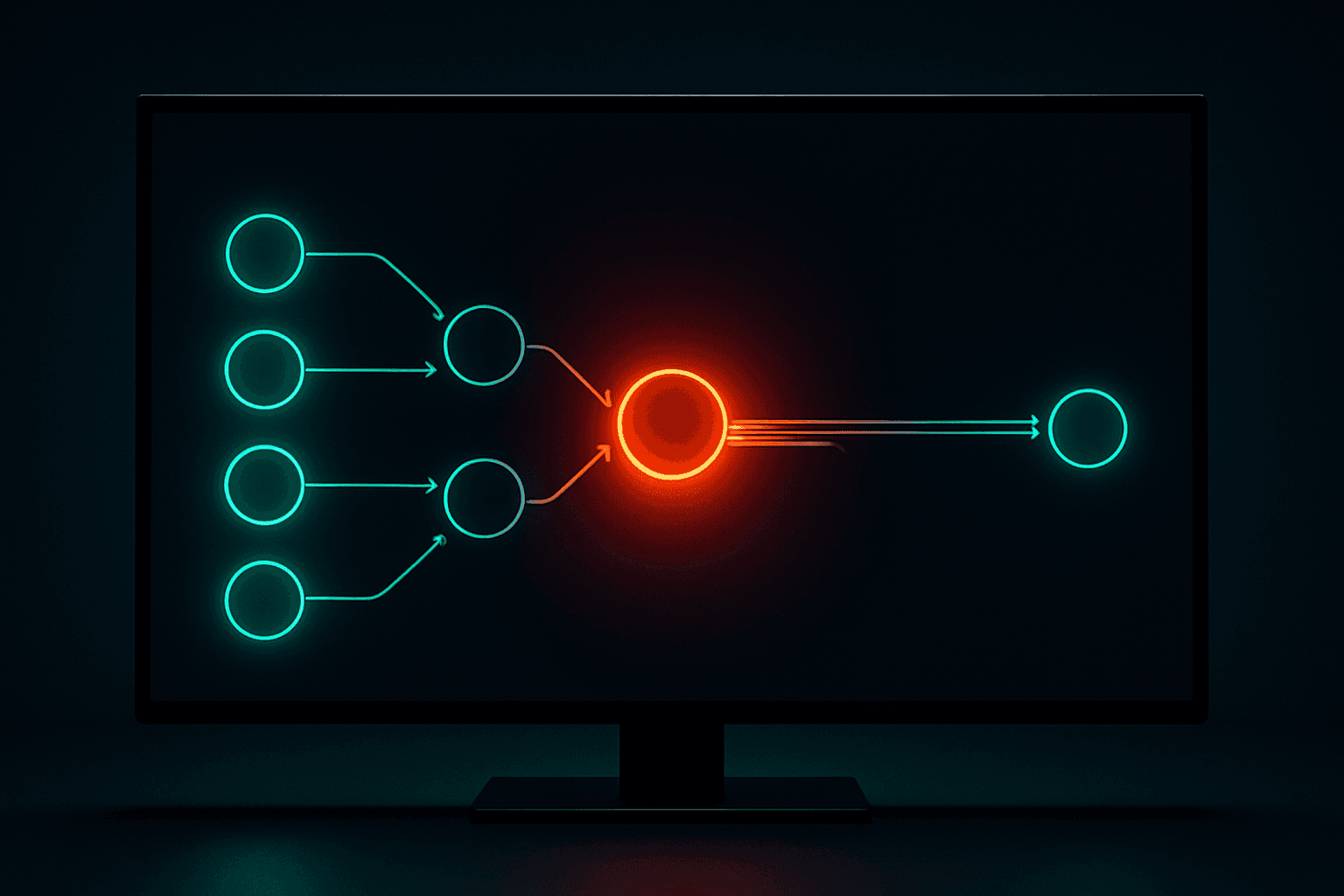

The old model of building software looks like a diamond. A small product team defines work, hands it to a large engineering team, then narrows again through QA and release.

The new model looks like a double funnel. Humans engage deeply at the start, defining intent and exploring options. AI executes the middle. Humans engage deeply again at the end, validating outcomes before anything reaches production.

This is not a workflow tweak. It is a structural inversion of where human effort concentrates. It changes who you hire, how you organize teams, what skills you pay a premium for, and where your competitive advantage accumulates.

For companies running lean, this structural shift is an opportunity with a short window. Your advantage is speed of decision-making. You do not have the bureaucratic layers that slow down larger competitors. A 100-person company can redesign its workflows in a quarter.

If your AI strategy is "give everyone a copilot and wait for the numbers to improve," you are optimizing the wrong layer.

Dooder Digital helps companies redesign work for AI, not bolt AI onto existing work. Start with a free AI workflow audit.
