AI Code Governance: Who Is Auditing AI-Generated Code?

AI-generated code is already in production. Learn how technology leaders should audit, review, and govern code written with AI.

Here is the assumption most engineering leaders are working from: once we establish an AI coding policy, our engineers will follow it. The reality in most engineering teams is different: AI adoption is already at full speed, the policy either does not exist or was written six months ago and ignored, and nobody is checking what the AI is producing.

This is not a technology problem. It is a governance problem. And it is sitting in your production environment right now.

What is AI code governance?

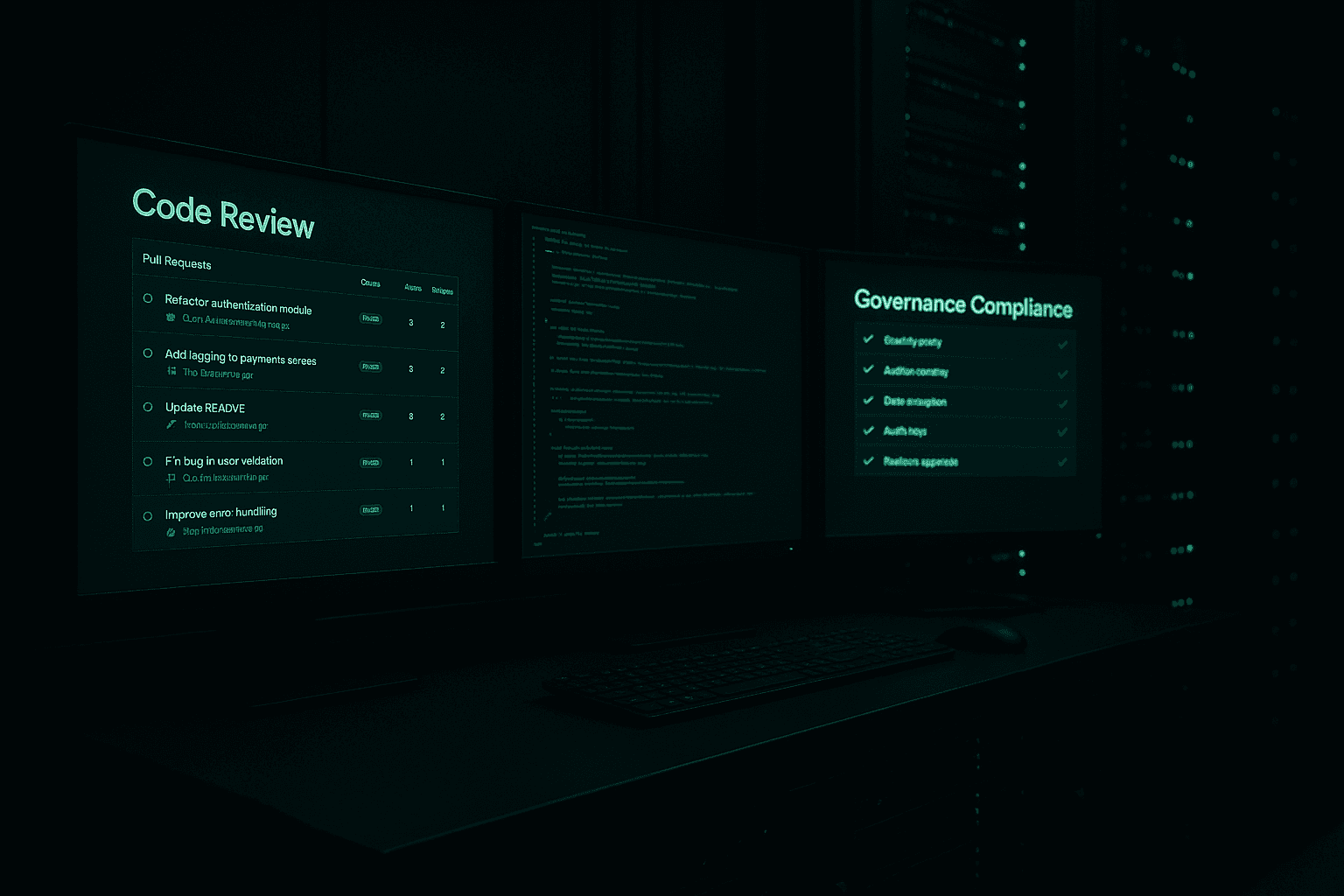

AI code governance is the system of policy, review standards, automated scanning, and accountability controls that governs how AI-generated code gets written, reviewed, and shipped. It matters because AI output is already reaching production faster than most review processes were built to handle.

The Assumption Gap

According to the Sonar State of Code 2026 report, 42% of all code in circulation is AI-generated or AI-assisted. That number did not emerge from a company-wide rollout with guardrails and training. It emerged from individual engineers doing what engineers do: finding tools that help them work faster and using them.

Your engineers are not waiting for permission. They are using GitHub Copilot, Cursor, Claude, and ChatGPT on their own machines, in their own workflows, right now. Some are doing it well. Most are doing it without any defined standard for what "well" means in your organization.

The question is not whether AI is in your codebase. It is already there. The question is who is accountable for what it produced.

What the Data Shows

The security research on AI-generated code is not encouraging.

A January 2026 study by Tenzai tested 15 production applications. Every single one contained Server-Side Request Forgery vulnerabilities. Not one had working CSRF protection. Researchers found 69 total vulnerabilities across those 15 apps.

Escape.tech analyzed 5,600 production applications and found over 2,000 vulnerabilities. Among them: more than 400 exposed secrets and 175 personal data exposures, including medical records.

CodeRabbit's research found that AI-generated code contains 2.74 times more cross-site scripting vulnerabilities than human-written code.

These are not edge cases from small teams with no process. These are production systems at real organizations. The pattern is consistent: AI-generated code ships fast, looks clean on the surface, and contains security flaws that code review was not set up to catch at this volume.

Speed Without Oversight Is Not Efficiency

Here is a dynamic that makes this worse. Faros AI data shows that AI adoption increases PR size by 154% and review time by 91%. Engineers are writing more code per pull request. Reviewers are spending more time on each one. The net effect is that the volume of code going into your codebase has increased, and the review process is struggling to keep up with it.
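A quick back-of-the-envelope calculation makes the imbalance concrete. The sketch below assumes, purely for illustration, that both Faros AI percentages describe the same average pull request; the function name is hypothetical.

```python
def review_time_per_line_ratio(pr_size_increase_pct: float,
                               review_time_increase_pct: float) -> float:
    """Review time per line after AI adoption, relative to before (1.0 = unchanged)."""
    size_factor = 1 + pr_size_increase_pct / 100          # 154% larger -> 2.54x
    time_factor = 1 + review_time_increase_pct / 100      # 91% longer  -> 1.91x
    return time_factor / size_factor


ratio = review_time_per_line_ratio(154, 91)
print(f"Review time per line: {ratio:.2f}x the pre-AI level")
```

Under that assumption, review time grows more slowly than PR size, so each line of code receives roughly a quarter less reviewer attention than it did before.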

More code, slower reviews, and higher defect rates per line of AI output. That combination should concern every engineering leader at a company where software is tied to revenue, compliance, or customer data.

The AI writes the code, but the responsibility stays with you. If a vulnerability in an AI-generated endpoint exposes customer data, your organization owns that breach. The tool your engineer used does not carry that liability. You do.

What the Best Engineering Leaders Are Doing

Engineering leaders who are ahead of this problem are not banning AI or slowing down development. They are building a governance layer that travels with the AI output from the moment it is generated.

Addy Osmani, Engineering Director at Google Chrome, advocates for spec-first development with AI. Engineers write a detailed specification before prompting the AI. The AI works from the spec. The output gets reviewed against the spec. This gives reviewers something concrete to check against instead of free-form AI output.
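One way to make "reviewed against the spec" tangible is to express each requirement as a small executable check. This is a minimal sketch of that idea, not a description of Osmani's own workflow; the `Requirement` class, the slug example, and `generated_slugify` (standing in for AI output) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

SlugFn = Callable[[str], str]


@dataclass(frozen=True)
class Requirement:
    id: str
    description: str
    check: Callable[[SlugFn], bool]  # True when the implementation satisfies it


# The spec is written BEFORE prompting the AI.
SPEC = [
    Requirement("R1", "Lower-cases the input", lambda f: f("Hello") == "hello"),
    Requirement("R2", "Replaces spaces with hyphens", lambda f: f("a b") == "a-b"),
    Requirement("R3", "Drops characters other than a-z, 0-9, hyphen",
                lambda f: f("a/b") == "ab"),
]


def generated_slugify(title: str) -> str:
    # Stand-in for AI-generated code: plausible, but it ignores R3.
    return title.lower().replace(" ", "-")


def unmet_requirements(impl: SlugFn, spec: list[Requirement]) -> list[str]:
    """Return the ids of requirements the implementation fails."""
    return [req.id for req in spec if not req.check(impl)]


print(unmet_requirements(generated_slugify, SPEC))  # ['R3']
```

The reviewer's question shifts from "does this look right?" to "which numbered requirement does this fail?", which is a much easier question to answer at volume.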

Some teams are using rule files, such as CLAUDE.md files committed directly to the repository, that tell the AI what the team's security standards, coding conventions, and prohibited patterns are. The AI applies these rules during generation. The rules also give reviewers a documented standard to enforce.
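A rule file only helps if it actually covers what reviewers are expected to enforce. As a rough sketch, a team could check that its rule file contains the sections it has agreed on. CLAUDE.md is free-form markdown, so the section names below are assumptions chosen to mirror the three areas mentioned above, and `missing_sections` is a hypothetical helper.

```python
import re

# Hypothetical section titles; a real team would pick its own.
REQUIRED_SECTIONS = ("Security standards", "Coding conventions", "Prohibited patterns")


def missing_sections(rule_file_text: str, required=REQUIRED_SECTIONS) -> list[str]:
    """Return required section titles that have no matching markdown heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(r"^#{1,6}\s+(.+?)\s*$", rule_file_text, flags=re.MULTILINE)
    }
    return [title for title in required if title.lower() not in headings]


example_rule_file = """# Project rules
## Security standards
- Never build SQL with string concatenation.
## Coding conventions
- Type-hint all public functions.
"""

print(missing_sections(example_rule_file))  # ['Prohibited patterns']
```

A check like this could run in CI so that the rule file cannot silently drift away from the standard reviewers are asked to apply.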

Cross-model review is another emerging practice. A second AI model reviews the output of the first, with explicit prompting to look for security flaws, logic errors, and compliance issues. This does not replace human review. It surfaces issues before human reviewers see the code, which means reviewers spend their time on harder problems instead of catching obvious vulnerabilities.
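The shape of a cross-model review step can be sketched in a few lines. To stay vendor-neutral, a "model" here is simply any function from prompt text to response text; real code would wrap whichever SDK the team uses. The prompt wording, the `ReviewedChange` type, and the stub models are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable

# A "model" is any function from prompt text to response text.
Model = Callable[[str], str]

REVIEW_PROMPT = (
    "Review the following code for security flaws, logic errors, and "
    "compliance issues. Reply with one finding per line, or NONE.\n\n{code}"
)


@dataclass
class ReviewedChange:
    code: str
    findings: list[str]  # input for the human reviewer, not a verdict


def generate_and_cross_review(task: str, generator: Model, reviewer: Model) -> ReviewedChange:
    """Generate code with one model, then ask a second model to critique it."""
    code = generator(task)
    raw = reviewer(REVIEW_PROMPT.format(code=code))
    findings = [
        line.strip()
        for line in raw.splitlines()
        if line.strip() and line.strip().upper() != "NONE"
    ]
    return ReviewedChange(code=code, findings=findings)


# Stub models so the sketch runs without any API access.
def stub_generator(task: str) -> str:
    return 'query = "SELECT * FROM users WHERE id = " + user_id'


def stub_reviewer(prompt: str) -> str:
    return "Possible SQL injection: query built by string concatenation."


result = generate_and_cross_review("look up a user by id", stub_generator, stub_reviewer)
print(result.findings)
```

The findings travel with the change into human review rather than approving or rejecting it, which keeps the second model in an advisory role.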

Automated gates at the pull request level complete the picture. Static analysis tools, including SAST, and secret detection run on every PR before a human reviews it. The gate fails if the code does not meet the standard. Engineers fix the issue before it reaches a reviewer.
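To show what such a gate does mechanically, here is a deliberately small secret-detection sketch that scans only the lines a PR adds. The three patterns are illustrative assumptions; dedicated scanners ship far larger and better-tuned rule sets, and this is not a substitute for one.

```python
import re

# Illustrative patterns only, not a complete rule set.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded credential": re.compile(
        r"(?i)\b(password|secret|api_key|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_added_lines(diff_text: str) -> list[tuple[int, str]]:
    """Return (diff line number, finding name) for added lines that look like secrets."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only lines added by the PR; skip the '+++ b/file' header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


def gate(diff_text: str) -> int:
    """Exit code for CI: 0 passes the gate, 1 fails it."""
    findings = scan_added_lines(diff_text)
    for number, name in findings:
        print(f"diff line {number}: possible {name}")
    return 1 if findings else 0


sample_diff = """\
+++ b/config.py
+DEBUG = False
+password = "hunter2-hunter2"
-API_KEY = "AKIAIOSFODNN7EXAMPLE"
"""

exit_code = gate(sample_diff)  # prints: diff line 3: possible hard-coded credential
```

In a pipeline, the diff text would typically come from `git diff` against the target branch, and the return value of `gate` would be passed to `sys.exit` so that a finding blocks the merge. Removed lines are ignored on purpose: the gate judges what the PR introduces, not what it cleans up.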

Together these practices form a layer of accountability that did not exist when AI adoption started. They do not slow down development. They make the speed of AI-assisted development sustainable.

What This Means for CIOs Right Now

If you are a CIO or CTO, you need to answer three questions about your engineering organization today.

First, do you have a documented AI coding policy that your engineers know about and follow? Not a policy that was sent in an email. A policy that is enforced, reviewed, and tied to your security standards.

Second, do you have tooling at the PR level that catches what human reviewers miss? Static analysis, secret detection, and automated security scanning are table stakes at this point. Without them, AI-assisted development introduces risk faster than manual auditing catches it.

Third, is someone in your organization accountable for the quality and security of AI-generated code? Not accountable for blocking AI adoption. Accountable for ensuring that what the AI produces meets your standards before it ships.

Policy without tooling is aspiration. Tooling without accountability is infrastructure that nobody uses. All three need to be in place and connected to each other.

Most companies have none of the three. Some have one. Fewer still have all three working together. The gap between where most organizations are and where they need to be is significant, and it is growing as AI adoption accelerates.

FAQ: What should engineering leaders do first?

What is the first step for AI code governance?

The first step is to define a real standard for AI-assisted code, then enforce it through pull-request gates, review rules, and clear ownership.

Why is policy alone not enough?

Policy alone fails because engineers move faster than unenforced documents. The controls have to live inside the workflow.

What should teams scan automatically?

Teams should automatically scan for security flaws, exposed secrets, risky patterns, and violations of coding standards before human review starts.

Assess Your AI Governance Posture

If you are not certain how much AI-generated code is in your production systems, or whether your current review process is equipped to handle it, that uncertainty is itself a finding.

Dooder Digital works with CIOs and CTOs to assess their AI governance posture, identify specific gaps in policy, tooling, and accountability, and build a governance layer that keeps pace with the speed of AI-assisted development.

Book a Briefing at dooderdigital.com/schedule-call to start with a focused assessment of where your organization stands.
