Why Your AI Agents Keep Failing (It's Not the Model)

AI deployment failures almost always come down to architecture, not the AI itself. Here is what most organizations get wrong and how to fix it.

Your AI agent pilot failed. Leadership is asking why. Your engineers say the model needs more tuning. The vendor says you need more training data.

Both answers are wrong.

Salesforce published a framework in March 2026 titled "8 Design Principles for the Agentic Enterprise." The central argument is worth quoting directly: "Plastering an agent on top of an existing but inadequate architecture won't work." That one sentence explains why so many AI deployments are failing right now.

The model is not the problem. GPT-4, Claude, Gemini are not the weak link. The weak link is the infrastructure underneath them. The data pipelines with no semantic context. The access controls with no expiration logic. The operations team with no way to see what the agent did or why.

If you fix the architecture, the agents work. If you don't, you get what Salesforce describes as "disconnected experiments and wishful thinking."

The Four Layers You Need Before You Deploy Anything

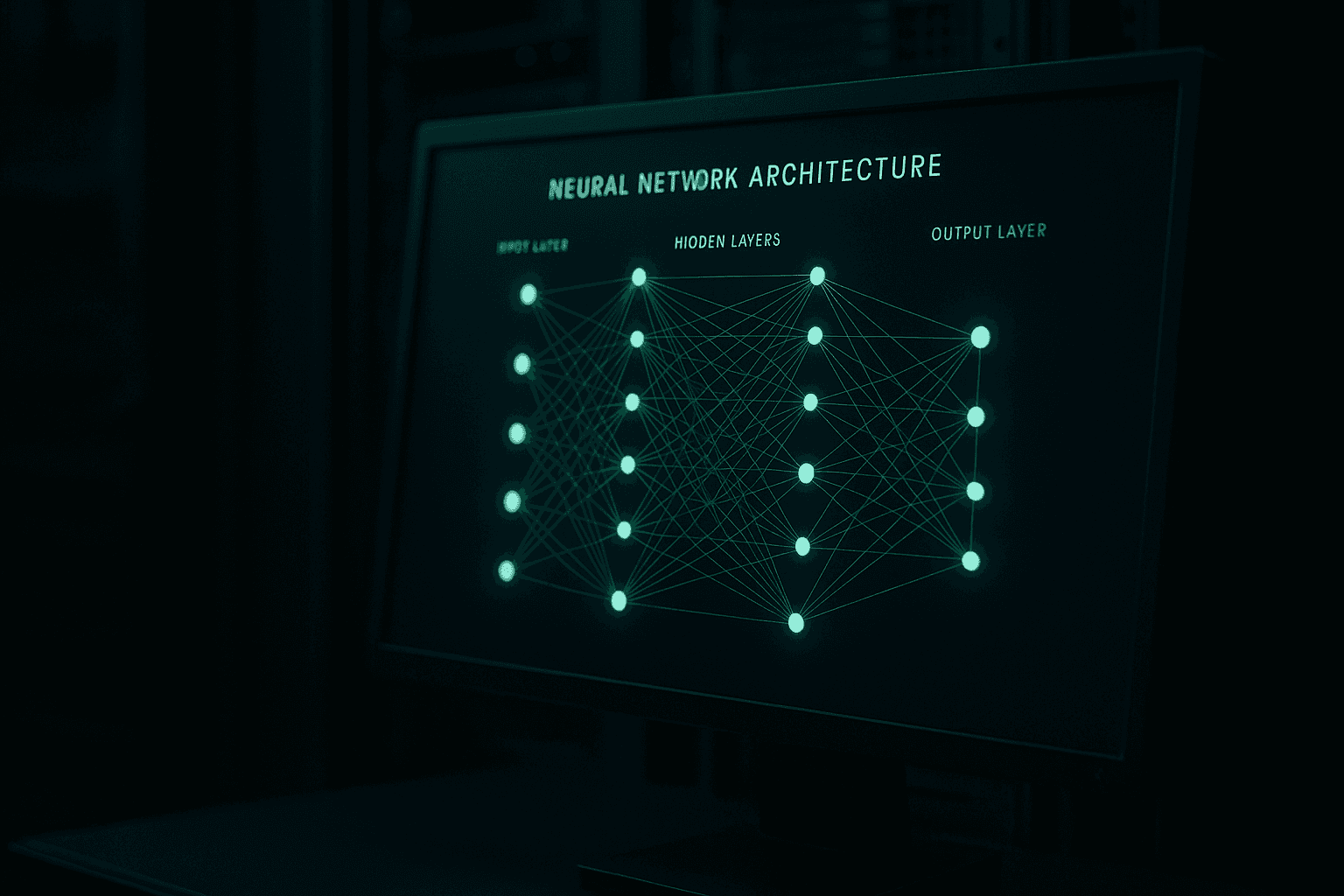

Salesforce organizes agentic architecture into four layers. Get these in place first, then deploy agents.

The system of data is your foundation. Agents need unified data with semantic context, not raw tables. They need to understand what the data means, not only read it.

The system of work is your business applications: ERP, CRM, HRIS. Agents need clean integrations into these systems, not brittle point-to-point connections.

The system of agency is where agents live and operate. This layer handles agent identity, permissions, and coordination between agents.

The system of engagement is the interface layer where people and agents interact: workflows, approvals, and escalations.

Most organizations skip the first two layers and build directly into the third. Agents end up pulling from messy data sources with no context and connecting to business applications through fragile custom code. When something breaks, nobody knows why.

The Three Things Most Teams Skip

Among the eight principles Salesforce outlines, three are almost universally skipped. These three failures account for the majority of broken deployments.

Observability

You need to see what your agents are doing in real time. Not only whether the task completed. What reasoning the agent applied. What data it read. What actions it took. What it decided not to do.

Most organizations deploy agents and then operate them blind. When something goes wrong (wrong customer data written to a CRM, an approval routed incorrectly, a task completed on the wrong record), there is no audit trail. Your team spends hours or days reconstructing what happened.

Unified observability means a single place where you see agent actions, reasoning chains, data accessed, and business outcomes. Without it, you are running operations you cannot inspect and therefore cannot improve.

This is not a nice-to-have you add once you scale. It is table stakes for any agent touching a business-critical process.
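To make "a single place" more concrete, here is a minimal Python sketch of what one audit record might capture, assuming a simple append-only JSON-lines store. The names `AgentTraceEvent` and `TraceLog`, and every field name, are hypothetical: they are not part of the Salesforce framework or any vendor product. In production the log would live in your observability platform, not in memory.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AgentTraceEvent:
    """One auditable agent step. Hypothetical schema for illustration only."""
    agent_id: str
    task_id: str
    action: str                 # what the agent did, e.g. "crm.update_record"
    reasoning: str              # short summary of why it chose this action
    data_read: list[str] = field(default_factory=list)  # sources consulted
    declined: list[str] = field(default_factory=list)   # actions considered, not taken
    outcome: str = "pending"    # business result, filled in once known
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class TraceLog:
    """In-memory stand-in for a central, append-only observability store."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def record(self, event: AgentTraceEvent) -> None:
        # One JSON object per line, so any log pipeline can ingest it.
        self._lines.append(json.dumps(asdict(event)))

    def for_task(self, task_id: str) -> list[dict]:
        # Reconstruct everything the agent did for one task, in order.
        events = (json.loads(line) for line in self._lines)
        return [e for e in events if e["task_id"] == task_id]


# Usage: record a CRM update, including an action the agent chose not to take.
log = TraceLog()
log.record(AgentTraceEvent(
    agent_id="agent-billing-01",
    task_id="T-1001",
    action="crm.update_record",
    reasoning="Invoice marked paid in ERP; syncing status to CRM",
    data_read=["erp:invoice/8841", "crm:account/552"],
    declined=["crm.close_account"],
))
```

Recording declined actions alongside completed ones is what lets your team answer "what did it decide not to do?" without reconstructing events by hand.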

Trust and Governance

Agents need identities. Not shared service accounts. Not inherited credentials from the engineer who built the integration. Verifiable agent identities with permissions scoped to specific tasks and time windows.

Here is the governance failure pattern at most companies. An engineer builds an agent, grants it broad access to get the job done, and ships it. Six months later, the engineer is gone. The agent is still running with permissions nobody remembers granting. It has access to systems it no longer needs. Nobody audited it because nobody knew it was there.

The Salesforce framework calls for governance baked in, not bolted on. Permissions expire when the task ends. Every agent has a documented identity and a clear owner. Access gets reviewed on a schedule, not when something breaks.

For any company with compliance obligations (HIPAA, SOX) or facing investor audit scrutiny, such as a PE-backed company, this is not optional. An agent with unaudited access to financial data is a liability, not an asset.
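Here is one way "permissions expire when the task ends" could look in code: a minimal Python sketch assuming a hypothetical in-house model where each agent identity carries a named owner, a last-review date, and grants tied to one task and one time window. `Grant`, `AgentIdentity`, and the 90-day review interval are illustrative assumptions, not a standard or a vendor API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    """A permission scoped to one task and one time window (hypothetical model)."""
    scope: str            # e.g. "erp:read"
    task_id: str
    expires_at: datetime


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str            # a named human accountable for this agent
    last_reviewed: datetime
    grants: list[Grant]

    def is_allowed(self, scope: str, task_id: str, now: datetime) -> bool:
        # Access needs a matching scope, the same task, and an unexpired window.
        return any(
            g.scope == scope and g.task_id == task_id and now < g.expires_at
            for g in self.grants
        )

    def review_overdue(self, now: datetime,
                       every: timedelta = timedelta(days=90)) -> bool:
        # Scheduled review: flag the identity if nobody has checked it recently.
        return now - self.last_reviewed > every


# Usage with a fixed clock so the results are deterministic.
now = datetime(2026, 3, 1, tzinfo=timezone.utc)
agent = AgentIdentity(
    agent_id="agent-invoices-02",
    owner="jane.doe@example.com",
    last_reviewed=now - timedelta(days=120),
    grants=[Grant("erp:read", "T-2001", now + timedelta(hours=2))],
)
print(agent.is_allowed("erp:read", "T-2001", now))                       # True
print(agent.is_allowed("erp:read", "T-2001", now + timedelta(hours=3)))  # False: expired
print(agent.is_allowed("erp:write", "T-2001", now))                      # False: never granted
print(agent.review_overdue(now))                                         # True: 120 > 90 days
```

Because every grant carries its own expiry, an agent left running after its builder leaves loses access on its own, and the overdue-review flag surfaces it to a named owner.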

Strategic Human Oversight

Salesforce uses an airport security analogy: X-ray everything, and manually inspect the suspicious cases. Not every bag. Not no bags.

Most organizations go to one extreme or the other. Either humans approve every agent action, which defeats the purpose of automation, or humans approve nothing, which creates unacceptable risk the first time an agent acts on bad data.

Strategic human oversight means designing intervention points deliberately. Define which action categories trigger human review before an agent executes. Define what the escalation path looks like. Define the criteria for pausing an agent workflow entirely.

This is an architecture decision, not an operational one. It needs to be built into the system, not improvised by the agent.
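Those intervention points can be expressed as a routing policy that runs before any agent action executes. This Python sketch assumes hypothetical action categories, a dollar threshold, and a consecutive-error count that pauses the workflow; every name and number is a placeholder for your own criteria, not part of the Salesforce framework.

```python
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"        # agent proceeds on its own
    HUMAN_REVIEW = "review"    # queue for a person before executing
    PAUSE = "pause"            # stop the workflow and escalate


# Hypothetical policy: categories that always need a human first.
REVIEW_CATEGORIES = {"payment", "contract_change", "customer_data_delete"}
PAUSE_ERROR_THRESHOLD = 3      # consecutive failures before pausing


def route_action(category: str, amount: float, recent_errors: int,
                 amount_limit: float = 10_000.0) -> Decision:
    """Decide, before execution, whether an agent action needs a human."""
    if recent_errors >= PAUSE_ERROR_THRESHOLD:
        return Decision.PAUSE
    if category in REVIEW_CATEGORIES or amount > amount_limit:
        return Decision.HUMAN_REVIEW
    return Decision.EXECUTE


print(route_action("status_update", 0, recent_errors=0))  # Decision.EXECUTE
print(route_action("payment", 250.0, recent_errors=0))    # Decision.HUMAN_REVIEW
print(route_action("status_update", 0, recent_errors=3))  # Decision.PAUSE
```

Checking the pause condition first means a misbehaving workflow stops even for actions that would normally run unattended: every action gets screened, and only the suspicious ones reach a person.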

Open Architecture in Practice

One more principle worth attention: open ecosystems. The Salesforce framework specifically names MCP (Model Context Protocol) and open APIs as requirements for any serious agentic deployment.

The practical implication: do not let a vendor build you an agent deployment you cannot move. Proprietary agent platforms with locked data formats and closed integration layers are a dead end. When you want to change models, add capabilities, or switch vendors, you need portable workflows and open interfaces.

MCP is emerging as the standard protocol for agent-to-tool communication. Building against open APIs and MCP-compatible integrations keeps your architecture flexible. Building against a vendor's proprietary layer gives you a liability.

Where This Leaves You

The organizations winning with AI agents right now are not the ones with the best models. They are the ones who treated architecture as a first-class problem before deploying anything.

They built data layers with semantic context. They scoped agent permissions to specific tasks. They deployed observability before they deployed agents. They defined human oversight criteria before the first workflow ran.

The organizations still struggling are running disconnected pilots against legacy infrastructure, with no governance layer and no visibility into what their agents are doing. They keep changing models. The problem follows them.

As Salesforce puts it, referring to its eight principles: "Without them, you don't have an Agentic Enterprise. You have disconnected experiments and wishful thinking."

Assess Your Agentic Architecture

If your organization has AI agents in production, or is planning to deploy them, Dooder Digital runs a focused architecture readiness assessment for CIOs and CTOs.

We look at your data layer, your governance model, your observability setup, and your human oversight design. We tell you what is missing and what needs to be built before you scale.

Book a briefing at dooderdigital.com/schedule-call to start with a 30-minute architecture review.
