Why Software Rollouts Fail (And How AI Adoption Is Different)

Salesforce. The new ERP. The tool everyone ignores after six weeks. The pattern is always the same. Here is why it keeps happening, and how AI adoption can be different.

You know this story. The company spent six months evaluating Salesforce. They brought in a consultant, customized the fields, migrated the data, and ran an all-hands training session. Ninety days later, the pipeline data is three months out of date. The sales team is still tracking deals in a spreadsheet. When leadership asks why, the answer is always some version of: "The system is too complicated" or "We need more training."

The same story plays out with ERPs that become filing cabinets. Project management tools that have one active user. Ticketing systems that the team routes around. You do not need a technology background to have lived through this. Almost every organization has a version of it.

The common assumption is that the tool failed. In most cases, it did not. The same tools are working well at companies similar to yours. The variable is not the software.

The Real Cause

Software rollouts fail because of how they are run, not what is being rolled out. The same tool that collects dust at one company becomes indispensable at another. The difference is almost never the technology. It is the implementation approach, the change management, and what happens in the thirty days after go-live.

When we look at rollouts that did not deliver adoption, four failure modes show up with remarkable consistency.

Four Failure Modes

The tool was chosen by the people least likely to use it. IT evaluates the security architecture. Leadership evaluates the vendor relationship and the price. The people whose daily workflow is about to change are brought in for one demo near the end of the process, after the decision has already been made. They feel no ownership over the tool. They were not asked what problems they needed solved. They are handed a login and expected to be grateful.

Training was one generic session. A one-hour kickoff where an implementation consultant walks through every feature of the system is not training. It is a tour. Effective training is role-specific. It focuses on the exact workflows each role performs, not the full feature set. It is repeated at thirty and sixty days, when people have real questions from real use. Generic, one-time training produces generic, one-time usage.

Nobody owned adoption after go-live. This is the most common failure mode we see. The implementation project had a project manager, a timeline, and a budget. The go-live date was the finish line. Once the system was deployed, the project was considered complete. The team that built it moved on. Nobody was responsible for measuring adoption, identifying friction, or fixing problems that emerged in the first sixty days. The system was left to fend for itself.

The old way of doing things stayed available. This one is subtle but consistent. When people have a choice between a new tool they are still learning and a familiar process they know works, most people choose the familiar process. If the old spreadsheet is still accessible, if the old inbox workflow is still running in parallel, if there is no point at which the new system is the only option, adoption will stall. Change requires a forcing function. Leaving the escape route open prevents the change from taking hold.

AI Is About to Repeat This Pattern

Every software wave produces the same cycle: hype, investment, disappointment, recalibration. The internet was going to change everything by 2002. CRM was going to transform sales organizations. Digital transformation was going to reinvent the enterprise. The benefits were real. The adoption rates were consistently lower than projected.

AI is in the middle of its hype phase. Companies are spending significant budgets on evaluating tools, building systems, and making commitments. The implementation quality is mixed. The change management discipline is mostly absent.

In three years, a meaningful number of these AI investments will look exactly like the Salesforce instance nobody uses. The technology will not be the reason. The rollout will be the reason.

What the Companies Getting It Right Do Differently

The organizations that achieve real AI adoption treat the rollout as the actual project. The build is the beginning. The system is done when the team uses it consistently, not when it is deployed.

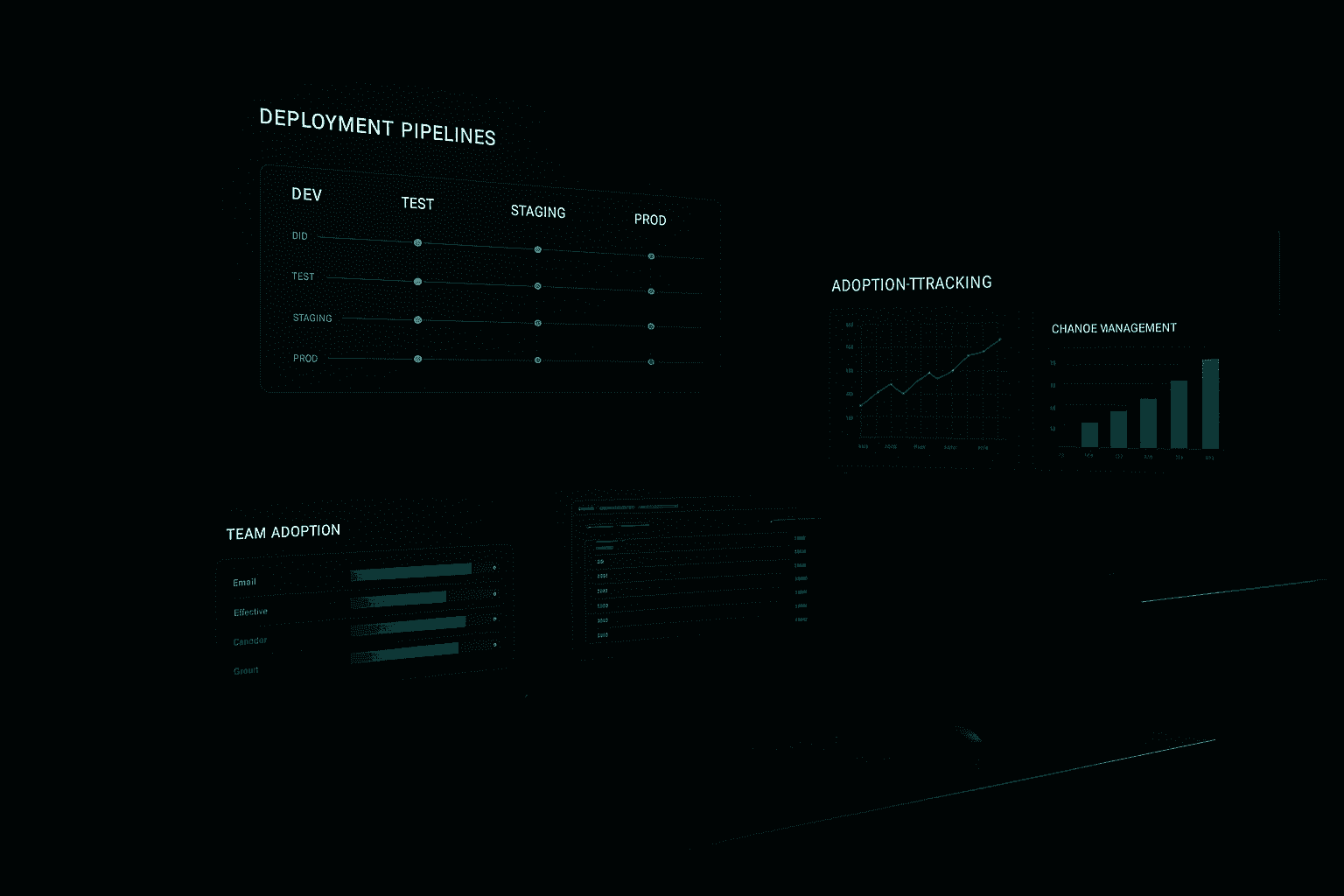

This means measuring usage at thirty, sixty, and ninety days. Not system uptime. Not API call volume. Human usage. Are the people this was built for using it? How often? Where are they dropping off? What is the friction point?
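To make the thirty, sixty, and ninety day check concrete, here is a minimal Python sketch. It assumes you can export a log of (user, date) events from the new system, where an event means the person did real work in the tool, plus the list of people the system was built for. The function names, the seven-day activity window, and the sample data are all hypothetical, chosen for illustration rather than taken from any particular product.

```python
from datetime import date, timedelta


def active_users(events, intended_users, end, window_days=7):
    """Intended users with at least one event in the `window_days` days ending on `end`."""
    start = end - timedelta(days=window_days)
    return {user for user, day in events if user in intended_users and start < day <= end}


def adoption_report(go_live, intended_users, events, checkpoints=(30, 60, 90), window_days=7):
    """Share of intended users active at each checkpoint, plus who dropped off since the last one."""
    if not intended_users:
        raise ValueError("intended_users must not be empty")
    report = {}
    previous = set()
    for offset in checkpoints:
        checkpoint_day = go_live + timedelta(days=offset)
        active = active_users(events, intended_users, checkpoint_day, window_days)
        report[offset] = {
            "active_share": len(active) / len(intended_users),
            # Users who were active at the previous checkpoint but not at this one.
            "dropped_off": sorted(previous - active),
        }
        previous = active
    return report


# Hypothetical example: four intended users, go-live on 1 January 2024.
team = {"ana", "ben", "cho", "dev"}
events = [
    ("ana", date(2024, 1, 29)), ("ben", date(2024, 1, 30)), ("cho", date(2024, 1, 31)),
    ("ana", date(2024, 2, 28)), ("ben", date(2024, 2, 27)),
    ("ana", date(2024, 3, 30)),
]
report = adoption_report(date(2024, 1, 1), team, events)
for offset, row in report.items():
    print(offset, row)
```

In the sample, the active share falls from 75% at day 30 to 25% at day 90, the drop-off list names who to talk to at each checkpoint, and one of the four intended users never appears at all. Those are the questions that uptime and API call volume cannot answer.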

It also means fixing adoption problems before they become culture problems. When a team develops a narrative that a tool does not work, that narrative spreads. It is much harder to change a cultural perception than it is to fix a workflow gap in the first thirty days. The window to intervene is short.

The companies that get AI adoption right also tend to have a named owner for adoption, distinct from the technical owner. The engineer who built the system is not the right person to manage behavior change in the team using it. Those are different skills. Treating them as the same role is a structural mistake.

One Thing to Do Before Your Next Rollout

Before you start evaluating AI tools for your next project, run a thirty-minute working session with the actual users. Ask two questions and record what you hear.

First: What about your current process is most painful? Not what is inefficient in the abstract. What is painful on a daily basis to the person doing the work.

Second: What would make you stop using a new tool within a month? This question surfaces the specific friction points and resistance factors that will kill adoption. People will tell you, if you ask. Most rollouts never ask.

The answers to those two questions will tell you more about what you need to build and how to roll it out than any vendor demo or requirements document.

If you are heading into an AI implementation and want a structured approach to change management that builds adoption from the start, our AI change management framework covers the process in detail.
