AI Change Management: Why Most AI Projects Fail After Rollout

Most AI projects fail after rollout, not during evaluation. See the adoption mistakes that stall usage and what to fix first.

Most AI projects do not fail in procurement. They fail six weeks after launch.

A company spends months evaluating vendors, sits through demos, negotiates contracts, and loops in IT. Then it runs one generic training session and calls that a rollout. Six weeks later, usage is flat, the workflow has not changed, and leadership decides AI was overhyped.

The technology was usually fine. The people side was ignored. That is why AI change management matters more than most teams expect.

Why do AI projects fail after rollout?

AI projects usually fail after rollout because stakeholder alignment was weak, training stayed generic, resistance was ignored, and leaders measured installation instead of daily usage. In most failed rollouts, the technology worked. The adoption system did not.

The Four Reasons AI Adoption Fails

When we dig into failed AI projects, the same four issues show up over and over.

1. No stakeholder alignment before the build

The people who will use the tool were not involved in choosing it. Leadership picked something, IT implemented it, and the team was handed a login. That is not buy-in. That is a surprise. People resist surprises, especially when the surprise involves changing how they do their jobs.

Alignment has to happen before a single line of code is written. That means sitting down with the actual users, understanding what they find painful about their current workflow, and getting their input on what a solution should look like. When people feel ownership over a tool, they are far more likely to use it.

2. Generic training instead of role-specific guidance

Most AI training looks like this: a 45-minute webinar showing every feature, followed by a PDF guide, followed by an email with a link to a help center. That is not training. That is a disclaimer.

Effective training is role-specific. A salesperson needs to know how the tool helps them write follow-up emails faster. A project manager needs to know how it helps them summarize status updates. They do not need to know about features that do not apply to them. Generic training produces generic adoption, which in practice means almost none.

3. Resistance that was entirely predictable

Some people will resist AI tools. Not because they are difficult, but because the tool threatens something real: their status, their expertise, their sense of job security. This resistance is predictable. It is mappable before you launch.

We have seen companies go into rollouts blind, then act surprised when their senior account managers push back on an AI tool that automates the thing they have been doing manually for fifteen years. That resistance did not appear out of nowhere. It was sitting right there in the org chart if anyone had looked.

4. Measuring installation, not usage

After rollout, most teams track one metric: did we turn it on? Did everyone get a login? Did IT check the box? They confuse installation with adoption. Those are not the same thing.

Real adoption means people are using the tool in their daily workflow, not logging in only when someone reminds them. If you are not tracking usage 30, 60, and 90 days after launch, you will not know the project is failing until it is too late to course-correct.

What Change Management Looks Like in Practice

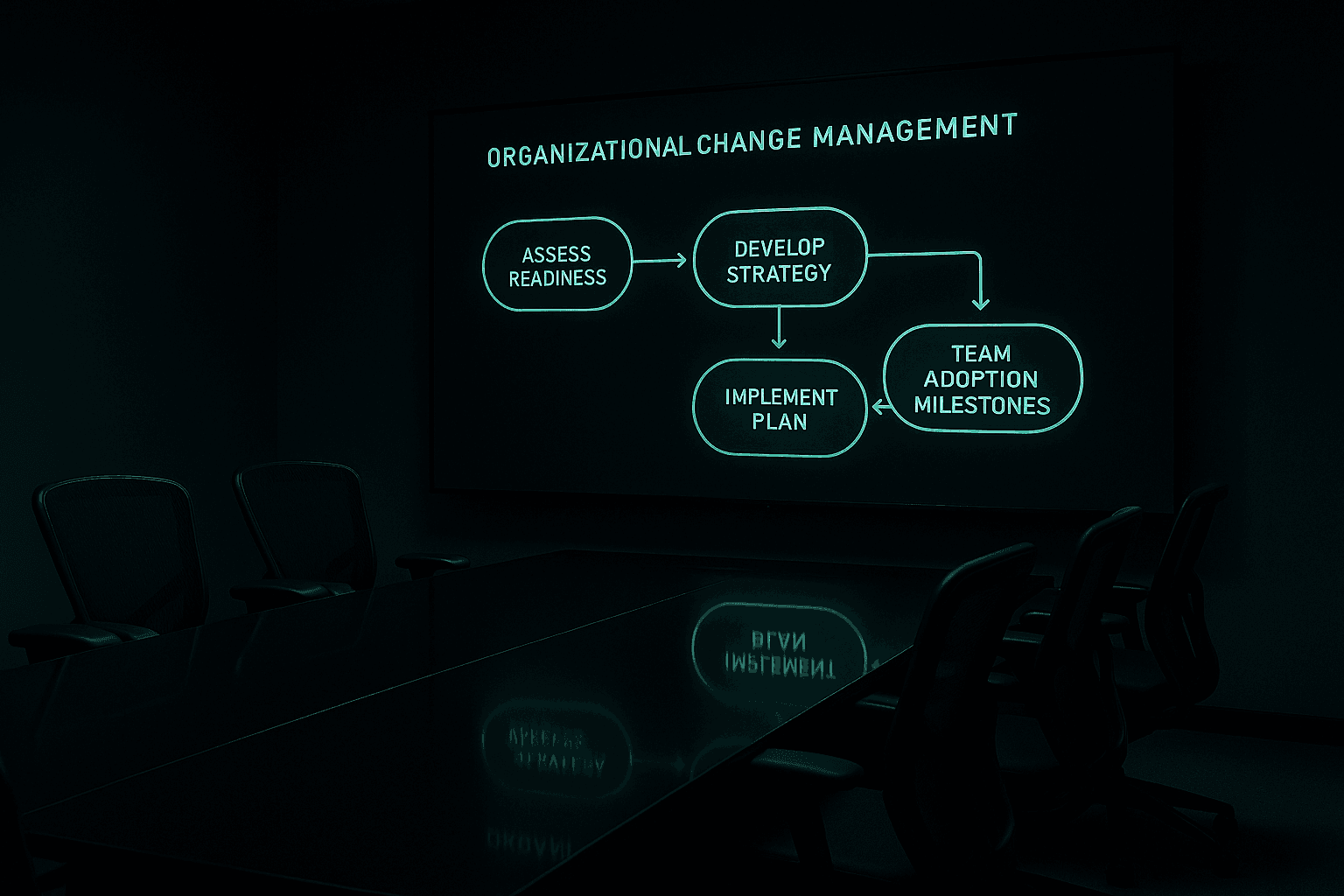

In our experience, effective AI organizational change management is not complicated. It is four things done consistently.

Stakeholder alignment sessions before build

Before the first technical decision, we run structured sessions with every group that will be affected. Not leadership only. The real users. We ask what is slowing them down, what they are skeptical about, and what success would look like for them. We document it and feed it back into the project plan. By the time the tool is ready, these people have been part of the process for months. They are not surprised. They are ready.

Role-by-role training plans

We build a training plan for each team that will use the tool, focused entirely on their specific workflows. A customer service rep gets different training than a finance analyst. Each plan includes: what the tool does for their role, a hands-on session with their actual work (not hypothetical examples), and a clear escalation path when something does not work.

Resistance mapping before launch

Before we go live, we build a simple map: who is likely to resist, why, and what we need to address. Usually it is two or three people with legitimate concerns. We address those concerns directly before launch, not after. In our experience, this step alone removes most rollout friction.

30-60-90 day adoption tracking

After launch, we track usage by role, not by account alone. We look at frequency, depth of use, and whether the tool is being used for the workflows it was built for. At 30 days, we identify who is not using it and why. At 60 days, we course-correct. At 90 days, we have a clear picture of real adoption versus what was planned.
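To make the 30-60-90 checkpoints concrete, here is a minimal Python sketch of how usage could be summarized by role. It assumes usage events can be exported from the tool as simple records (user, days since launch, workflow), that each checkpoint looks back over the preceding 30 days, and that a user counts as "adopted" after at least four active days in that window. The `UsageEvent` type, the `adoption_report` function, and the threshold are hypothetical illustrations, not any specific product's API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    user_id: str
    day: int        # days since go-live (day 0 = launch day)
    workflow: str   # workflow the tool was used for (hypothetical labels)


CHECKPOINTS = (30, 60, 90)  # review points, in days after launch
WINDOW_DAYS = 30            # each checkpoint covers the preceding 30 days


def adoption_report(users, events, target_workflows, min_active_days=4):
    """Summarize adoption per role at each checkpoint.

    users: dict of user_id -> role, covering everyone who was given access.
    events: iterable of UsageEvent.
    target_workflows: set of workflows the tool was built for.
    min_active_days: assumed threshold for counting a user as adopted.
    """
    events = list(events)
    members_by_role = defaultdict(list)
    for user_id, role in users.items():
        members_by_role[role].append(user_id)

    report = {}
    for checkpoint in CHECKPOINTS:
        start = checkpoint - WINDOW_DAYS
        active_days = defaultdict(set)      # user_id -> distinct days with usage
        role_events = defaultdict(int)      # role -> events in this window
        role_on_target = defaultdict(int)   # role -> events on target workflows
        for e in events:
            if e.user_id not in users or not (start <= e.day < checkpoint):
                continue
            role = users[e.user_id]
            active_days[e.user_id].add(e.day)
            role_events[role] += 1
            if e.workflow in target_workflows:
                role_on_target[role] += 1

        per_role = {}
        for role, members in members_by_role.items():
            days = [len(active_days[u]) for u in members]  # frequency per user
            adopted = sum(1 for d in days if d >= min_active_days)
            total = role_events[role]
            per_role[role] = {
                # Divided by everyone with access, not just people who logged in.
                "adoption_rate": adopted / len(members),
                "avg_active_days": sum(days) / len(members),
                # Share of usage on the workflows the tool was built for.
                "target_workflow_share": role_on_target[role] / total if total else None,
            }
        report[checkpoint] = per_role
    return report


# Example with made-up data: two salespeople and one finance analyst.
users = {"ana": "sales", "ben": "sales", "cy": "finance"}
events = [UsageEvent("ana", d, "follow_up_email") for d in range(0, 30, 5)]
events.append(UsageEvent("ben", 2, "other"))
events += [UsageEvent("cy", d, "reconciliation") for d in range(30, 60, 3)]

report = adoption_report(users, events, {"follow_up_email", "reconciliation"})
for checkpoint, per_role in report.items():
    for role, metrics in per_role.items():
        print(checkpoint, role, metrics)
```

One design choice worth noting: the adoption rate divides by everyone in a role who was given access, not by the people who happened to log in, so installation alone never inflates the number. In this made-up example, sales looks half-adopted at day 30 and then goes silent, which is exactly the kind of drop the 60-day course correction is meant to catch.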

The Before and After

Here is what a project without change management looks like: three months of build, a rushed rollout, usage at 20% after six weeks, quiet abandonment by month four. The technology worked. Nobody used it.

Here is what a project with change management looks like: three months of build, preceded by two months of stakeholder work, a phased rollout by team, usage tracking from day one, specific interventions for low-adoption groups, and a 90-day review that shows the tool embedded in daily workflow. The technology worked. People used it.

The difference is not the AI. The difference is whether the organization was ready to receive it.

This is not a soft, nice-to-have addition to an AI project. It is the difference between a project that delivers results and one that becomes a cautionary tale in your next budget meeting.

FAQ: What should change management cover in an AI rollout?

What should happen before launch?

Before launch, teams should align stakeholders, map likely resistance, define role-based training, and agree on the usage metrics that matter after go-live.

What should leaders measure after launch?

Leaders should measure real usage, frequency, workflow depth, and adoption by role at 30, 60, and 90 days.

What is the simplest way to improve adoption?

The simplest way to improve adoption is to show each role how the tool removes a specific pain in their daily work instead of training everyone the same way.

Ready to Build AI Your Team Uses?

If you are planning an AI project or trying to rescue one that has stalled, the people side needs as much attention as the technology side. We work with organizations at every stage of AI adoption to build the change management framework that makes rollouts stick.

Learn how we approach AI organizational change management and what it looks like to work with a team that treats adoption as a deliverable, not an afterthought. If you are earlier in the process, pair this with our guides on why AI initiatives stall and how to calculate AI ROI.
